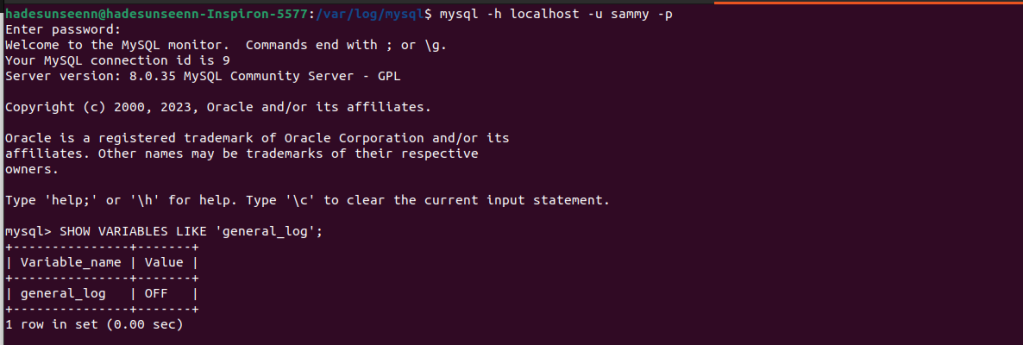

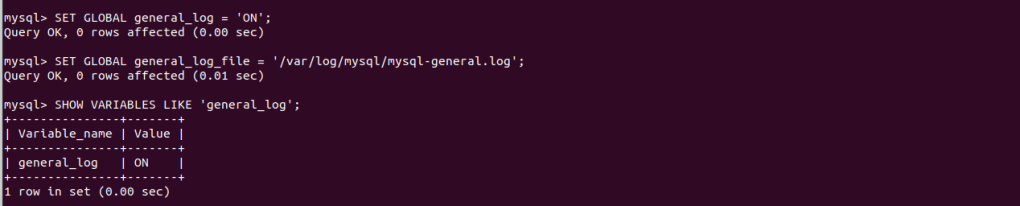

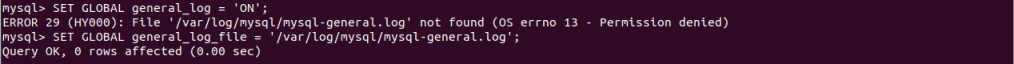

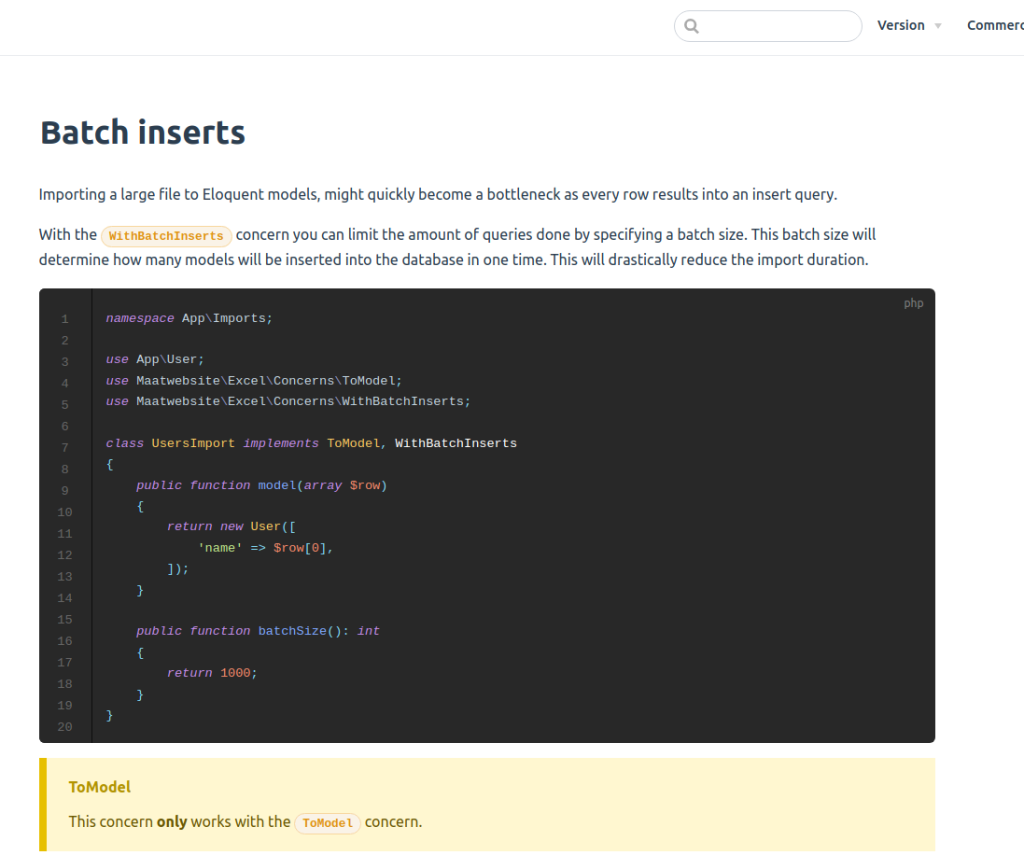

I’ve been using Laravel Excel for about a year now, and it’s been really helpful for importing large CSV files into my database. However, I wasn’t entirely sure how their batching feature works. So, I’ve been looking into it using the information I found in the MySQL log file. Here they explained how we can implement batching.

Used Laravel version 9 and Laravel Excel version 3.1

Initially, I assumed that if I have 10,000 records and my batch size is set to 1000, it would result in only 10 insert queries to the database. However, after examining the MySQL logs, I realized that this is not the case.

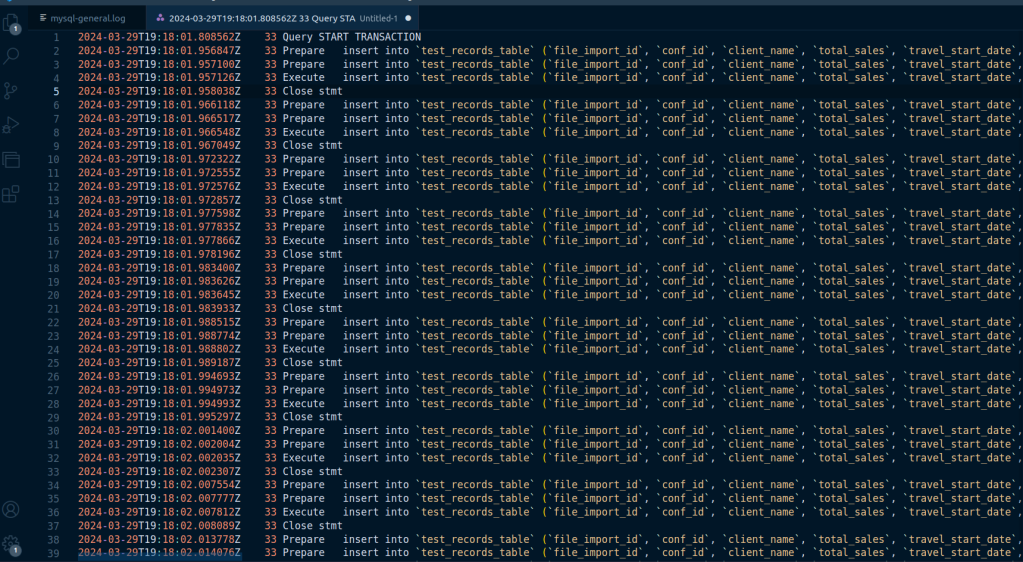

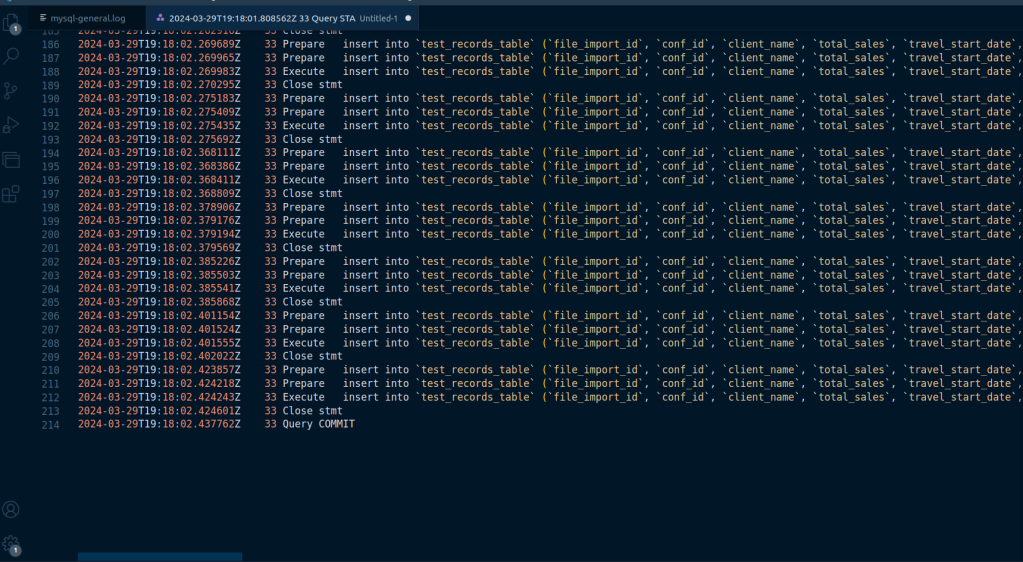

it is evident that each record from the dataset is being prepared and executed as an individual insert operation within a single transaction. This means that for each record, there’s a separate prepare and execute cycle, but all of these operations are encapsulated within a single transaction.

This behavior does not equate to batching in the sense of executing a single insert statement for multiple rows. Instead, each row is inserted individually, but the use of transactions likely helps in optimizing the process by ensuring that all inserts are either committed or rolled back together, which can improve performance and ensure data integrity.

So, while there isn’t a single insert query for multiple rows, the package is using transactions to group multiple insert operations together, which is a form of batch processing but on the transaction level rather than the insert statement level. This approach ensures that the database is not committed for every single insert operation, which would be significantly less efficient.

Here’s what I’ve managed to figure out, but I might be mistaken. If any of you have experience with this package and have attempted to grasp its logic, please share your insights.